Gates Foundation, IHEP to develop a national metrics framework to factor in today’s “post-traditional” students.

Education bigwigs are currently designing a national metrics framework that will allow for the inclusion of data about today’s evolving higher-ed student. But are college and university leaders prepared to step outside of their on-campus analytics comfort zone and into national, standardized guidelines?

It’s easier to understand the new metrics framework in the context of the Integrated Postsecondary Education Data System (IPEDS). IPEDS aims to help institutions track student data, such as time to completion, completion rates, student loan information and types of degrees completed. But much of this data, notes the Bill & Melinda Gates Foundation, is collected around the idea of a “traditional” student (one who starts college in the fall after high school graduation, attends a four-year on-campus institution, doesn’t transfer, etc.).

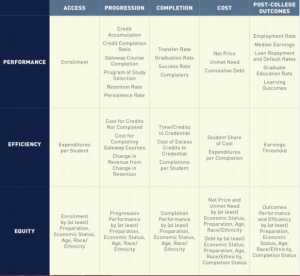

The new metrics framework–the brainchild of the Foundation and the Institute for Higher Education Policy (IHEP)–aims to be something akin to IPEDS 2.0: sets of metrics on “post-traditional” students related to student access, progression, completion, cost, post-college outcomes, institutional performance in relation to resources (efficiency), and performance in respect to diverse populations (equity).

A Field-Driven Metrics Framework

“The metrics published today often only include ‘traditional’ students and ignore the new normal in higher education: ‘post-traditional’ students attending college—or colleges—in new ways en route to their credentials,” writes the report’s author, Dr. Jennifer Engle, senior program officer at the Gates Foundation.

“One example that comes to mind is of my daughter,” said Casey Green, founding director of The Campus Computing Project. “She started at one college, transferred to another, and then transferred a third time. For those institutions, not only is she hard to track in terms of completion and progression, but which institution gets credit for her graduation? So far, no one’s been able to figure out how to track these kinds of metrics.”

According to the Foundation’s report on the new metrics framework, there are many other specific questions that must be answered for today’s prospective students and their families, including:

- How many “post-traditional” students—the low income, first-generation, adult, transfer, and part-time students who make up the new majority on today’s campuses—attend college? Do they reach graduation and how long does it take them?

- Do the students who don’t graduate transfer to other colleges and earn credentials, or do they drop out completely?

- Are students gaining employment in their chosen field after attending college, and how much do they earn?

- How are graduates using their knowledge and skills to contribute to their communities?

But even if the new metrics framework proves invaluable to prospective students, are college and university leaders ready and willing to agree to a national, standardized data framework?

It’s All About the Presentation

As the Foundation’s report emphasizes, some “vanguard” institutions have already begun collecting data on these “post-traditional” metrics, and these practices are helping to inform and shape the Foundation and IHEP’s framework.

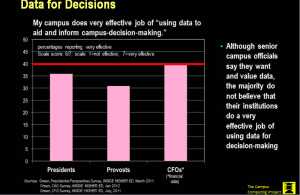

And according to the Campus Computing Project’s own data, presidents, provosts and CFOs also willingly admit that they’d like to do a better job of using data to aid and inform campus decision-making.

The issue, however, is that although innovative universities are beginning to use data and analytics to inform on-campus decision-making, collecting that data and moving it onto a national platform is intimidating, and often evokes a sense of foreboding thanks to K-12’s experience with outcomes standardization.

“It becomes an issue of taking it beyond the walled city, as it were,” explained Green. “Higher education is increasingly moving toward a culture of evidence at its foundation, but we don’t have a foundation that is widespread.”

And that’s in large part due to what Green described (and most in education know to be true) as a culture of blame.

“There’s a long history of using data as a weapon and not as a resource, especially against HBCUs,” he explained. “But if analytics are ever going to reach their potential to better student outcomes and make institutions more efficient, data has to move from cup to lip. It has to become a cup-to-lip movement.”

Green noted that in order to change the culture from “what you did wrong” to “how we do better,” the four T’s should be taken into consideration: Transparency (in the data collected and why), Training (in how to use the metrics and their tools), Trust (support and a positive outlook), and Tools (software and skills).

“Right now, the four T’s of analytics can be used in the micro sense for on-campus operational implementation success. But they should also be used on the macro level for the framework discussed by the Foundation and IHEP to make that a national success,” concluded Green.

IHEP will release a paper in the coming months with detailed recommendations for definitions of the metrics in the framework, adopting shared definitions from the field where there is consensus, while identifying where and why there are still divergent viewpoints. IHEP will also continue the conversation about postsecondary data and systems through the Postsecondary Data Collaborative—a coalition of nearly three dozen organizations seeking to improve data quality, transparency and use.

For more detailed information on the framework, its core design principles, and best practices from the institutions that lent their expertise to the Foundation as it developed the framework, read the full report, “Answering the Call: Institutions and States Lead the Way Toward Better Measures of Postsecondary Performance.”
