CIO uses customizable analytics engine to make data usable for the University’s specific goals.

Analytics technology, like MOOCs, has soared in popularity over the last few years. But as with MOOCs, many institutions have found that the key to success lies not in the technology itself, but in harnessing its capabilities to promote the university’s unique mission and goals.

The concept of analytics has become an interwoven fabric of the higher education tapestry. For years, institutions have amassed myriad data on student admission and retention, student GPA, and demographics; but, outside of data being used for budgetary consideration, or as a competitive metric against other institutions, higher education has only recently begun to harness the true power of predictive analytics and big-data mining for the purpose of improving the academic success rates of their students.

That’s because in 2010 there was excited talk of taking the data-driven predictive analytics models of large companies like Amazon and retooling them for a higher education context. The ubiquity of online courses and testing, e-textbooks, and social media meant that institutions were sitting on a mountain of capturable digital data. At issue, though, was whether it made sense to take something from businesses, which at their core spring from a common culture and speak a similar language, and apply it to higher education, a space composed of disparate institutional cultures that does not lend itself to a one-size-fits-all big data model.

Analytics are not a one-size-fits-all solution, say software experts and innovative colleges and universities. Every institution, through its mission and goals, is unique. So why aren’t the metrics within analytics solutions as diverse as their users?

There is more benefit to building an analytics model from the ground up within a unique campus environment than in forcing an institution to conform to a standard model, says one innovative University CIO.

Enter LoudCloud and Grand Canyon University.

“We base our work on the philosophy that there is no one magic formula that can predict retention across all levels,” explained Greg Harp, chief marketing officer of LoudCloud. “A Harvard University student is going to have different learning characteristics than, say, a student at a community college. However, using technological and behavioral analytics, we can create a system that indicates, and helps support, unique outcomes.”

Founded in 2010 by Manoj Kutty, LoudCloud Systems was created with the goal of constructing an education management platform that focused on using behavioral analytics to improve student and teacher outcomes while not forcing data conformity on institutions that adopt the system.

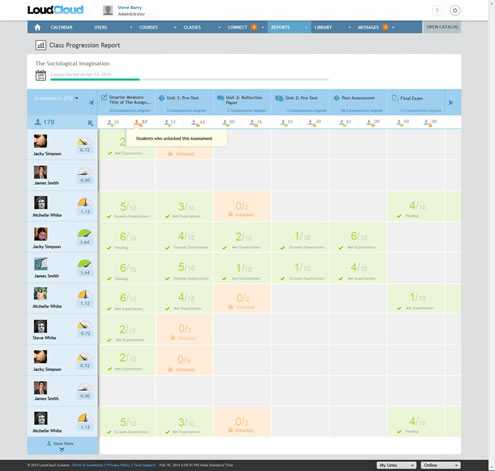

Made up of tools like LoudSight, LoudCloud’s student and faculty reports dashboard, and LoudBook, an interactive e-reader that can measure student participation and understanding of subject matter, the platform aims to provide meaningful, actionable data that, because the company works closely with faculty and administration, is tailored to each environment.

Grand Canyon University was one of the earliest adopters of the LoudCloud platform when it was introduced in 2010.

“We were looking for a new LMS,” recalled Joe Mildenhall, GCU’s CIO. “We’d been on Blackboard and had switched to Angel Learning, and we weren’t happy with the information coming out of the system. We were [also] looking because of some of the failures that we had in our current platforms, [such as] the ability to scale, and run a student body of over 20,000 really active online learners.”

GCU has a learning model where students heavily participate on an online platform, so having a platform that could [scale] reliably was a challenge for the University, said Mildenhall.

“The other piece we were looking for was consistent analytics coming out of the system. The prior systems viewed each course as a separate entity, and they [didn’t] allow you to tie things across courses very easily… student progress, how often they’re participating, what they’re doing. That was one of the things we were looking for: a system where we could monitor more of what was going on in the platform and then make use of it both to help students be successful as well as monitoring the quality of the educational experience we were delivering.”

When asked what was wrong with the data that previous systems had produced, Mildenhall replied, “It wasn’t well-formed data. You couldn’t say ‘OK, what’s the mid-course progress grade across all the courses currently being taught’ and there just weren’t things being measured. [LoudCloud] started with a great foundation [in that] they measured just about everything.”

Of course, it is not enough simply to measure every conceivable data point. A data stream is useless if it cannot be presented in a way that is meaningful to those who need it, especially faculty members who cannot afford to spend their already limited time, or their students’ time, working out how to interpret the data they are getting.

As Kutty explains, “We really began by thinking ‘what would an instructor want?’ Faculty have limited time, so working on a platform that’s intuitive and, above all else, immediately useful is critical. We tried to answer these three questions with LoudSight™: 1) How can it improve classroom materials? 2) How does it ensure spending time most effectively; meaning, how does it help the students most at-risk? And 3) How can the data help with an educator’s own professional development?”

“The real educator-focused characteristic of LoudSight is that the user can choose which data points he or she is interested in,” added Harp, “for example, content students accessed, forum posts for classwork, cumulative class scores, etc. The system itself chooses the options available using aggregated data within the institution that signals indicators, or triggers, of student engagement and retention. These indicators can be differentiated by institution and even course by course. We tried to limit the data reports to four on the educator’s dashboard to better visualize key points they want to focus on.”

“You can also break the data down to small actionable points,” explains Kutty. “Educators can correct curriculum instantly because the platform has the ability to break down individual course work or test questions by analyzing metrics such as: how many students got the questions right or wrong, how long students spent on the question, and how many answered the question.”
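The per-question breakdown Kutty describes can be pictured with a minimal Python sketch. It is an illustration only, not LoudCloud’s implementation: the `Response` record, its fields, and the `summarize_question` helper are all hypothetical names assumed for this example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Response:
    """One student's attempt at a single test question (hypothetical record)."""
    student: str
    correct: Optional[bool]  # None means the student skipped the question
    seconds: float           # time the student spent on the question


def summarize_question(responses: List[Response]) -> Dict[str, float]:
    """Count answered, right, and wrong responses and average the time spent."""
    answered = [r for r in responses if r.correct is not None]
    right = sum(1 for r in answered if r.correct)
    total_seconds = sum(r.seconds for r in answered)
    return {
        "answered": len(answered),
        "right": right,
        "wrong": len(answered) - right,
        # Average only over students who answered; 0.0 if nobody did.
        "avg_seconds": total_seconds / len(answered) if answered else 0.0,
    }
```

A question that most students answer incorrectly, or spend unusually long on, is the kind of signal an instructor could use to revisit the underlying course material.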

However, Kutty noted that, in general, the system tries to minimize the data individual educators must wade through, keeping more configurable indices for the admin level. “Keeping complexity for the higher-ups and simplicity for folks on the ground makes student data, which is inherently messy, organized.”

What Mildenhall and the administration at GCU have found beneficial with LoudCloud is that it allows them to not just interpret the data being captured but to act upon the data in an effective manner.

“We actually trigger automatic actions based on the data, and that’s the transition that people need to have,” says Mildenhall. “We’ll watch the participation of the students in the classroom, and if that participation level drops below what we’ve defined as a threshold we put an action in our academic counseling work queue to say ‘follow up with this person. Their activity seems to have dropped below this threshold.’ We’ll [also] establish an expectation with our faculty that we want papers graded in so many days, and we’ll watch that, and because of the data coming out of LoudCloud, we can say ‘ok, here’s a faculty [member] that is outside of the tolerances’ and so we’ll have a note for follow up with the faculty.”
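The threshold-driven follow-ups Mildenhall describes might look something like the Python sketch below. It rests on stated assumptions: the threshold values, queue names, and function names are hypothetical, since the article gives neither GCU’s actual tolerances nor any detail of how its work queues are implemented.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical thresholds for illustration; GCU's actual values are not stated.
MIN_WEEKLY_POSTS = 3
MAX_GRADING_DAYS = 7


@dataclass(frozen=True)
class FollowUp:
    queue: str    # work queue that receives the task (hypothetical names)
    subject: str  # student or faculty identifier
    reason: str   # why the follow-up was triggered


def participation_follow_ups(weekly_posts: Dict[str, int],
                             min_posts: int = MIN_WEEKLY_POSTS) -> List[FollowUp]:
    """Queue a counseling task for each student whose activity is below the threshold."""
    return [
        FollowUp("academic_counseling", student,
                 f"{count} posts this week, below threshold of {min_posts}")
        for student, count in sorted(weekly_posts.items())
        if count < min_posts
    ]


def grading_follow_ups(days_to_grade: Dict[str, float],
                       max_days: float = MAX_GRADING_DAYS) -> List[FollowUp]:
    """Flag each faculty member whose grading turnaround exceeds the tolerance."""
    return [
        FollowUp("faculty_follow_up", faculty,
                 f"{days:g} days to grade, above tolerance of {max_days:g}")
        for faculty, days in sorted(days_to_grade.items())
        if days > max_days
    ]
```

Keeping the thresholds as parameters reflects the article’s point that each institution defines its own tolerances rather than inheriting a standard model.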

Seen here is an example of a ‘Class Progression Report’ from the LoudSight dashboard, which presents tracking information, as well as drill-down menus, for each student.

When asked by eCampus News if his experience with LoudCloud would incline him to recommend the system to other institutions, Mildenhall’s response was an emphatic, “Yes, definitely.”

“Number one, they have a different window into the classroom which our students and faculty responded very well to as far as making it intuitive and easy to use; [the] second thing is it really provides that wealth of information out of the experience so you can go just as far as you want to in using that information to improve the academic experience and the students’ success.”

Doug Walker is an editorial freelancer with eCampus News.
